Introduction

Welcome to Akigi Dev Journal!

This is where I journal about my experience building my game Akigi.

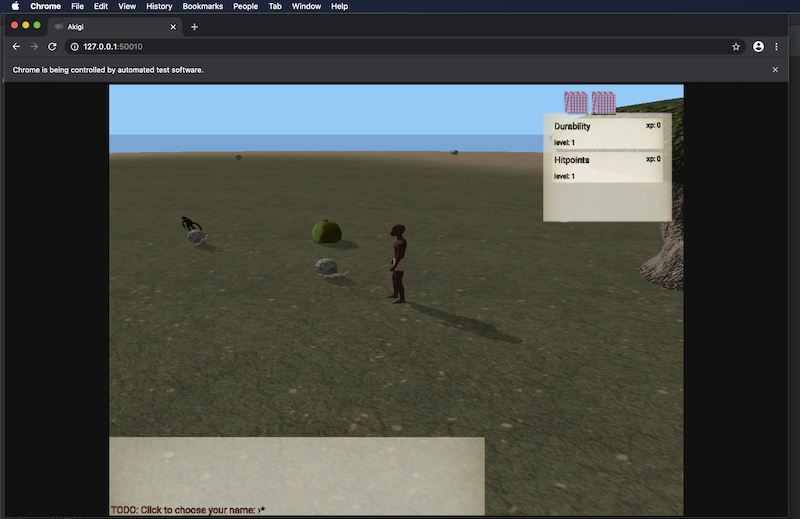

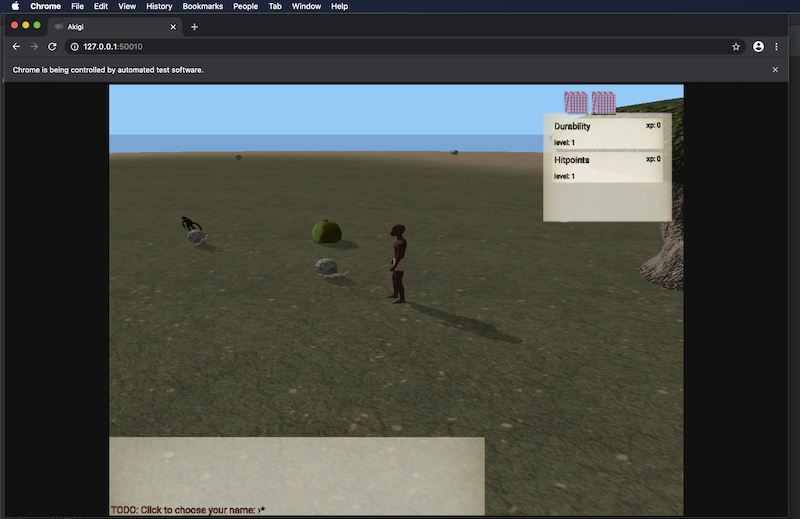

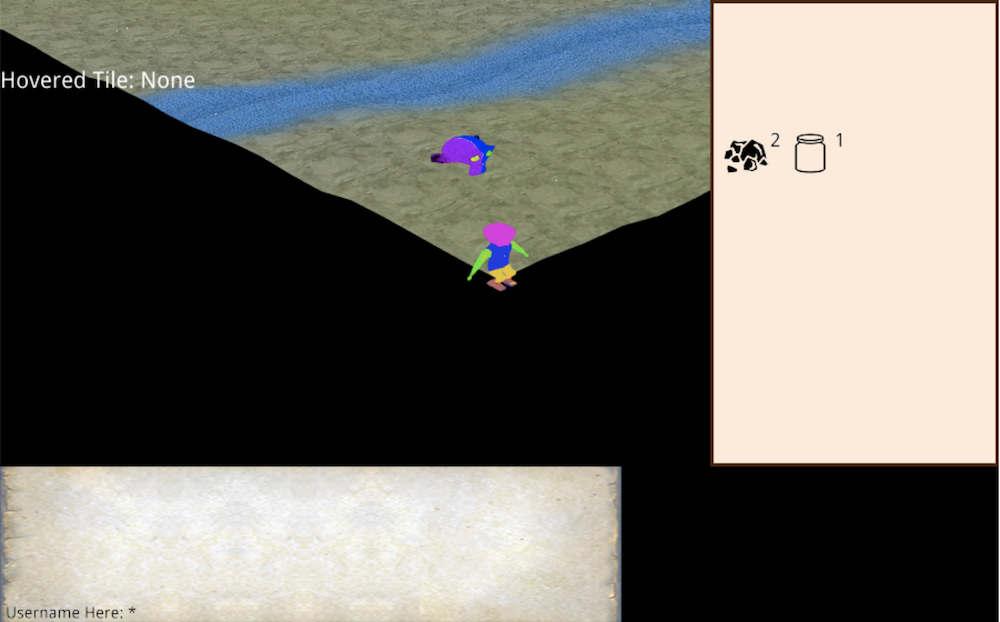

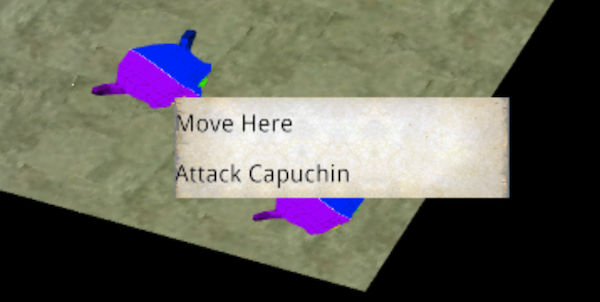

The multiplayer online world of Akigi.

I'm building Akigi alone using a game engine that I'm writing from scratch, which has me very excited but also comes with its fair share of challenges.

I write in my dev journal every week. Feel free to tag along for the ride.

Format

I write about my progress and struggles on both the game and business side of Akigi.

I post a journal entry every Sunday evening EST.

I include a transparent business and financial update on the first post of every month.

On any given week I might share words, images, videos, charts, or anything else that best communicates the progress and struggles.

Writing Approach

I keep little bullet point notes throughout the week so that I don't forget about things that I want to write more about.

Then at the end of the week I'll write the journal entry and then publish it.

I give it two or three editing passes before publishing.

Don't expect world-class editing or cohesion. It's very much a lightly polished raw dump of implementation details or problems or progress that I found interesting that week.

Code

The core Akigi repository is closed source.

Some of the libraries that power different aspects of Akigi are open source and can be found on my GitHub. I'll eventually make a list of these libraries somewhere.

Akigi's servers, clients, website and tooling are written in Rust.

Comments and Questions

Shoot me an email!

frankie.nwafili@gmail.com

Cheers!

120 - Texture Asset Compilation

May 23, 2021

This week I added some tests for creating and updating textures at different mipmap levels and got them passing in the MetalRenderer. I will need to circle back and get them passing in the WebGpuRenderer as well.

Now that the new runtime texture atlas allocation process is working, I need to update the asset compilation process to produce textures that can be used by the new system.

The old texture asset compilation code generated texture atlases, but I now instead need to generate individual texture files.

Also, the old process was a binary that had code for compiling many different kinds of assets, whereas ever since 088 new asset compilation is introduced through a plugin system where each plugin is focused on a particular kind of asset.

So I'm creating new asset compilation plugins to handle all of the game's texture asset compilation needs.

After that, I should be able to run the game and have it working with the new runtime texture allocation.

Other Notes / Progress

- Still moving a bit slowly, but working on a plan to better manage my time.

Next Journal Entry

By the next journal entry I want to finish porting all of the old texture asset compilation code over to the new plugin system.

Well Wishes,

- CFN

119 - Better Time Management

May 16, 2021

Last week I decided to abandon WebGL in favor of WebGPU and I feel like I have a new lease on life.

I'm already noticing that while working on rendering abstractions I am now more concerned with and thoughtful about the future, as opposed to being too weighed down by trying to design for WebGL and not having mental space to think about much else.

I refactored a bit of my renderer-test test suite to make it easier to test the contents of specific textures after running a RenderGraph.

I now need to build on top of this new foundation to add some tests for modifying and copying between GPU textures at different mip map levels.

After that I need to update the old texture asset compilation process to generate artifacts that are compatible with the new runtime texture allocation system.

From there I will get the remaining test cases passing and then finally get runtime texture allocation merged.

Other Notes / Progress

- I've been working on releasing some unrelated software that I'm licensing to another company, and I have been finding it difficult to balance working on Akigi with getting that other software done. This week I will be setting aside time to think through how to better manage my time between these two projects.

Next Journal Entry

I'm a broken record at this point. I need to finish this runtime texture allocation, but honestly my main priority this week is to make a plan for how to better balance my time so that I get back to making consistent progress on Akigi and the engine daily.

Well Wishes,

- CFN

118 - Goodbye WebGL

May 09, 2021

This week I continued working on the runtime texture allocator.

I made more progress on the texture group handling problems that I mentioned in 117.

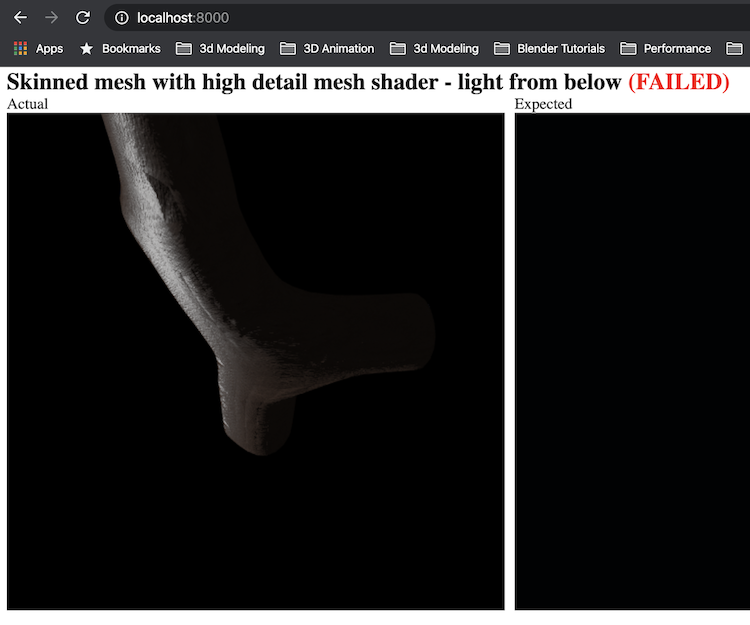

I've begun working on a couple of new renderer conformance tests to ensure that renderer implementations such as the MetalRenderer and the WebGpuRenderer can properly copy bytes between textures.

I also made the decision to drop support for WebGL and only support WebGPU for the web.

Moving Away from WebGL

Ever since I first heard of WebGPU a couple of years ago I have been excited about the potential to one day stop using WebGL. My code base and this dev journal are littered with dozens of comments to the tune of "we have to do things in this unfortunate way because of WebGL".

I've had to just roll with that since there is no other graphics API for the web that is supported in the major browsers, and it seems like WebGPU won't be well supported until 2022 or 2023.

The straw that broke the camel's back was not being able to read directly from a texture or copy between textures in WebGL. I found out that you need to use framebuffers as intermediaries for reading and copying.

Granted, this pales in comparison to what I had to do to support tiling textures in 072, but for me this was the final straw.

Going forwards I will no longer support WebGL as a backend for the game's rendering.

Instead, I will use WebGPU on the web and, for now, display a modal that tells users who don't have it enabled how to enable it.

Over the next year or two that means that way fewer people will end up trying the game, but that's fine because in reality I have a year or two of work before the game is worth trying in the first place.

I'm excited about taking this step. There are a number of graphics features such as texture arrays that I haven't been using since they don't work in WebGL that I can now start to make use of.

I think that the engine has a bright future, and this decision will bring me closer to that future. I just need to make do with the lack of WebGPU support in modern browsers in the near term.

Other Notes / Progress

- While working on this I pulled out some of my conformance testing code into a new crate called conformer.

Next Journal Entry

My first priority is implementing a couple of bits of the render graph that the runtime texture allocation needs to make use of, namely copying subtexture regions between textures.

After that I will switch the game from using the WebGlRenderer on the web to using the WebGpuRenderer.

This switch in and of itself should be fairly straightforward since the game is designed to work with anything that implements my Renderer trait.

Still, there will be a good bit of work to do to see the game running on top of WebGPU since my WebGpuRenderer currently only passes a small fraction of my renderer test suite.

I'm looking forward to getting past this hurdle since the modern graphics APIs like Metal and WebGPU are significantly better to work with than older APIs like WebGL.

There will be some pain over the next year or so while browsers don't yet support WebGPU, but once the major browsers support it I will be in a spectacular position.

I just need to ride it out until then and be okay with way fewer people trying out the game in the meantime, since virtually no one will have WebGPU enabled and very few will elect to enable it.

It's fine though. WebGL was really holding me back from diving in to some more advanced features that I want to implement but would have been either a pain or impossible with WebGL.

Well Wishes,

- CFN

117 - Runtime Texture Groups

May 2, 2021

The runtime texture atlas allocator saga continues.

Towards the beginning of the week I added a method to rectangle-pack to allow the freeing of placed rectangles.

This gives me the ability to de-allocate textures by removing their corresponding rectangle within the engine's RectanglePackAllocator.

Over time I plan to add more allocators to support different kinds of allocation strategies, such as allocating an atlas that I know will contain subtextures that are all the exact same size.

For now, however, the RectanglePackAllocator is the only implemented allocator.

After this I started working on one of the more complex parts of the runtime texture allocation process: handling grouped textures.

Handling Texture Groups

Certain textures need to always be placed in the same texture atlas as other textures. For example, a base color texture for a physically-based texture needs to always be in the same atlas as the corresponding normal map.

To achieve this, I'm tracking the atlas that the first member of a group gets placed in.

When subsequent members of a texture group are being placed, they all get placed into that same atlas.

If we try to place a group member and it can't fit, we move all of the members of the group to a different atlas and then place the new texture into that atlas.

In this way, we ensure that textures in the same group are always in the same atlas regardless of whether or not we download and buffer them at the same time and regardless of the order that we buffer them.
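The placement rules above can be sketched roughly as follows. This is a simplified illustration under loud assumptions, not the engine's code: each atlas only tracks how much free area it has instead of real rectangles, and `GroupedAtlases`, `ATLAS_AREA`, `atlas_with_room` and `place` are hypothetical names invented for the example.

```rust
use std::collections::HashMap;

// Hypothetical names for illustration only.
type AtlasId = usize;
type GroupId = u32;

/// Area that every toy atlas starts with.
const ATLAS_AREA: u32 = 100;

#[derive(Debug, Default)]
struct GroupedAtlases {
    /// Free area remaining in each atlas, indexed by AtlasId.
    free_area: Vec<u32>,
    /// The atlas that each group currently lives in.
    group_to_atlas: HashMap<GroupId, AtlasId>,
    /// Total area of the members placed so far for each group.
    group_area: HashMap<GroupId, u32>,
}

impl GroupedAtlases {
    /// Returns the first atlas with at least `area` free, creating a new atlas if needed.
    fn atlas_with_room(&mut self, area: u32) -> Option<AtlasId> {
        if area > ATLAS_AREA {
            return None;
        }
        if let Some(id) = self.free_area.iter().position(|free| *free >= area) {
            return Some(id);
        }
        self.free_area.push(ATLAS_AREA);
        Some(self.free_area.len() - 1)
    }

    /// Places a texture of the given area and returns the atlas that its whole group now lives in.
    fn place(&mut self, group: GroupId, area: u32) -> Option<AtlasId> {
        let placed_area = self.group_area.get(&group).copied().unwrap_or(0);

        let atlas = match self.group_to_atlas.get(&group).copied() {
            // The group's atlas still has room: keep the group where it is.
            Some(current) if self.free_area[current] >= area => current,
            // The new member does not fit: move every member to an atlas that can hold them all.
            Some(current) => {
                let target = self.atlas_with_room(placed_area.saturating_add(area))?;
                self.free_area[current] += placed_area;
                self.free_area[target] -= placed_area;
                target
            }
            // First member of the group: any atlas with room will do.
            None => self.atlas_with_room(area)?,
        };

        self.free_area[atlas] -= area;
        self.group_to_atlas.insert(group, atlas);
        self.group_area.insert(group, placed_area + area);
        Some(atlas)
    }
}
```

In this sketch, placing a 60 unit texture for group 1 and a 30 unit texture for group 2 fills most of the first atlas, so a later 30 unit member of group 1 causes the whole group to move to a second atlas. A real implementation would also need to copy the texture bytes on the GPU when a group moves.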

Monthly Finances

The finances for April 2021 were:

| item | cost / earning |

|---|---|

| revenue | + $4.99 |

| Stripe fees | - $0.44 |

| aws | - $218.22 |

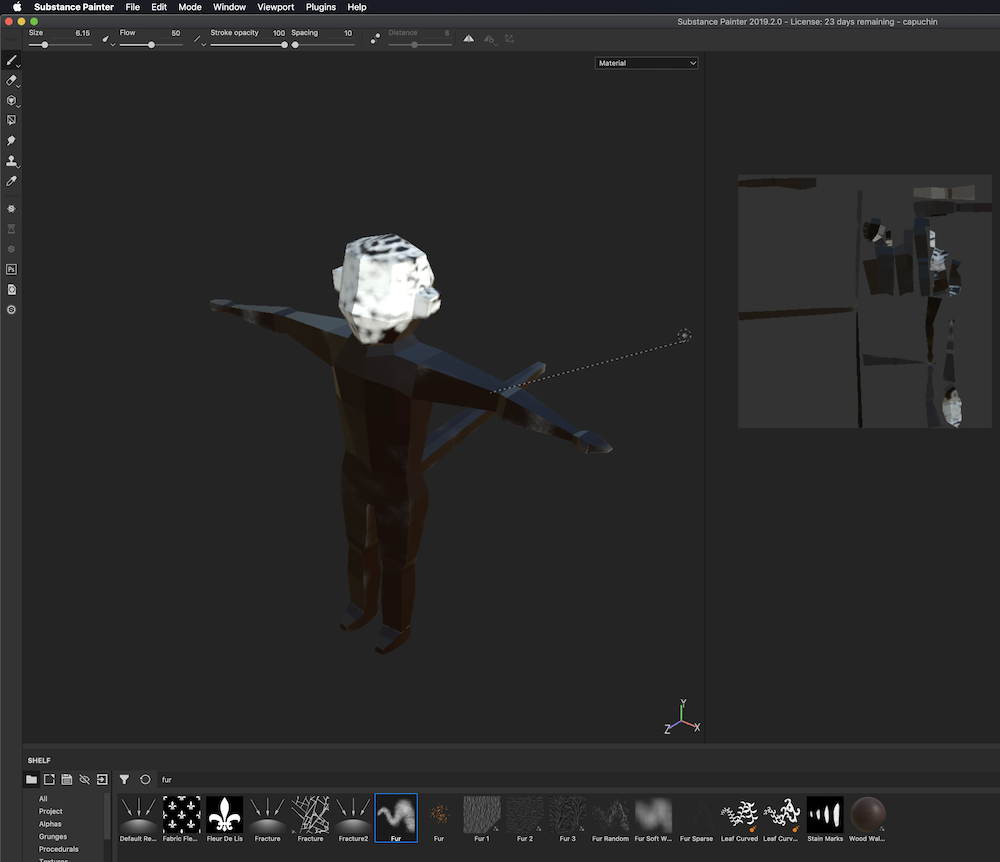

| adobe substance | - $19.90 |

| GitHub | - $9.00 |

| adobe photoshop | - $10.65 |

| --- | --- |

| total | - $253.22 |

Other Notes / Progress

- Started working on a method in rectangle-pack to coalesce freed rectangles.

Next Journal Entry

I'm going to continue working on the runtime texture allocation. Things are moving a little slowly since I've been busy writing other software in order to bring in more money in the near term, but day by day I am getting better at balancing all of my efforts.

Well Wishes,

- CFN

116 - Texture Atlas Overhaul

April 25, 2021

After a few weeks in a row of barely getting any Akigi work done while trying to tie off other unrelated loose ends, I got back on task and started to get warmed up this week.

What started off in 112 as a work stream to gradually introduce a runtime texture atlas allocation strategy in order to replace the current process of creating atlases in an offline asset compilation step has now evolved into just ripping off the bandaid all at once and moving all of my texture atlas usage to use run time allocated textures.

This will involve refactoring the texture related parts of my asset compilation process now that I no longer need to generate texture atlases at asset compile time.

One of the main reasons for moving to run time allocation is that it is much more space efficient. It isn't possible to know at asset compile time which textures will be needed at any given time since it all depends on where a player is in the world and what other players are around.

So, by dynamically downloading only the textures that we need and placing them into atlases at run time we are able to ensure that we're only downloading and buffering textures that we are actually using.

In the future I'll implement a deallocation strategy to free up space for textures that have not been used in a while. I'll also at some point think through de-fragmentation strategies.

The reason that I'm migrating everything now instead of slowly over time like I originally planned to is that doing it all at once will mean that I won't have to maintain and deal with the old code.

Implementation

On the CPU side I'm using lightweight representations of texture atlases in order to keep track of which textures are placed in which atlases.

```rust
use std::collections::HashMap;

/// Keeps track of textures allocated on the GPU during runtime, along with the used and free space
/// within each texture atlas.
/// This allows us to use a few textures on the GPU to store many different textures.
///
/// The VirtualTextureAtlasesResource serves as a 2d focused allocator.
#[derive(Debug)]
pub struct VirtualTextureAtlasesResource {
    next_atlas_id: VirtualTextureAtlasId,
    virtual_atlases: HashMap<VirtualTextureAtlasId, VirtualTextureAtlas>,
    // TODO: Perhaps HashMap<SubtextureId, (VirtualTextureAtlasId, SubtextureLocation)>
    //  to avoid the extra indirection when looking up subtextures.
    // TODO: Yeah, this will need other information such as the GroupId of the texture so that
    //  we can place it with other group mates in the future.
    subtexture_to_atlas: HashMap<SubTextureId, VirtualTextureAtlasId>,
}
```

No actual texture data is stored in the VirtualTextureAtlasesResource, just the sizes of the atlases and placed subtextures.

A VirtualTextureAtlas looks like this:

```rust
#[allow(missing_docs)]
pub type BoxedVirtualTextureAtlasAllocator = Box<dyn VirtualTextureAtlasAllocator + Send + Sync>;

/// Corresponds to a texture on the GPU.
#[derive(Debug)]
pub struct VirtualTextureAtlas {
    size: u32,
    allocator: BoxedVirtualTextureAtlasAllocator,
    mipmapped: bool,
}
```

Different texture atlases are able to use different allocation strategies by using a different VirtualTextureAtlasAllocator under the hood.

For example, if an atlas is meant to hold textures that will always be the same size you might use a much simpler allocator than if you need to be able to allocate and deallocate textures of unpredictable sizes.

Let Go Engine will ship with a few commonly useful allocators, but anyone can implement the VirtualTextureAtlasAllocator trait themselves for more custom approaches.
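To make the "simpler allocator for same-sized textures" idea concrete, here is a minimal sketch. The methods of the real VirtualTextureAtlasAllocator trait are not shown in this entry, so the `AtlasAllocator` trait, its `allocate` and `deallocate` methods, `Placement` and `FixedSizeAllocator` are all assumptions made up for this example.

```rust
/// Pixel offset of a placed subtexture within its atlas.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
struct Placement {
    x: u32,
    y: u32,
}

/// Hypothetical stand-in for an allocator trait. The engine's real trait may look different.
trait AtlasAllocator: std::fmt::Debug {
    /// Reserves space for a subtexture, or returns None if it cannot be placed.
    fn allocate(&mut self, width: u32, height: u32) -> Option<Placement>;
    /// Returns a previously allocated placement to the allocator.
    fn deallocate(&mut self, placement: Placement);
}

/// Allocator for a square atlas whose subtextures are all `cell_size` x `cell_size`.
#[derive(Debug)]
struct FixedSizeAllocator {
    cell_size: u32,
    /// Free cells. Allocation pops from the end of this list.
    free_cells: Vec<Placement>,
}

impl FixedSizeAllocator {
    fn new(atlas_size: u32, cell_size: u32) -> Self {
        assert!(cell_size > 0, "cell_size must be non-zero");
        let cells_per_row = atlas_size / cell_size;
        let mut free_cells = Vec::new();
        // Push in reverse so that popping hands out cells left to right, top to bottom.
        for row in (0..cells_per_row).rev() {
            for col in (0..cells_per_row).rev() {
                free_cells.push(Placement {
                    x: col * cell_size,
                    y: row * cell_size,
                });
            }
        }
        Self { cell_size, free_cells }
    }
}

impl AtlasAllocator for FixedSizeAllocator {
    fn allocate(&mut self, width: u32, height: u32) -> Option<Placement> {
        // This allocator only understands one subtexture size.
        if width != self.cell_size || height != self.cell_size {
            return None;
        }
        self.free_cells.pop()
    }

    fn deallocate(&mut self, placement: Placement) {
        self.free_cells.push(placement);
    }
}
```

Because every cell is the same size, freeing is just pushing the cell back onto the free list, with no coalescing or fragmentation to worry about. An atlas could hold this behind a `Box<dyn AtlasAllocator + Send + Sync>` in the same way that VirtualTextureAtlas holds its boxed allocator.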

There are a number of complex cases that need to be handled by the runtime texture allocation logic.

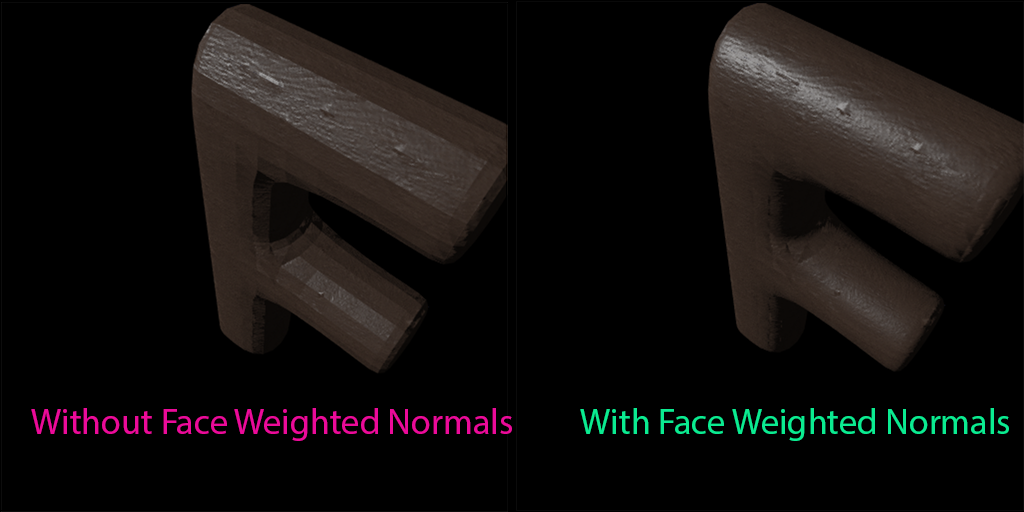

For example, some textures need to be in the same atlas as other textures. One example of this is with physically-based rendering textures, where you'll typically want your base color, roughness, metallic and normal textures all in the same atlas.

Well, a more modern approach is to just use texture arrays for PBR textures and not use an atlas at all, but I am currently supporting WebGL until WebGPU is supported in Chrome by default so I need to deal with a bit more complexity until then.

Mipmaps

As part of the work on how textures are handled, I'm also moving towards generating all mip levels myself at asset compile time and then downloading all of them at runtime.

WebGL allows you to automatically generate mipmaps, but modern graphics APIs do not. So while I'm in the mode of improving how textures are handled I decided to take care of generating my own mipmaps. This is actually fairly simple: I just need to repeatedly resize the texture to half the previous size.
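As a rough illustration of the halving, here is a small sketch that computes the dimensions of every mip level for a texture. It only covers the size arithmetic and assumes non-zero dimensions; the actual pixel resizing would be done by an image library, and `mip_chain_sizes` is a hypothetical helper name rather than something from the asset compiler.

```rust
/// Returns the (width, height) of every mip level, starting with the full-size level 0
/// and ending at 1x1. Each level is half the size of the previous one, rounded down,
/// and never smaller than one pixel in either dimension.
fn mip_chain_sizes(width: u32, height: u32) -> Vec<(u32, u32)> {
    let mut sizes = vec![(width, height)];
    let (mut w, mut h) = (width, height);

    while w > 1 || h > 1 {
        w = (w / 2).max(1);
        h = (h / 2).max(1);
        sizes.push((w, h));
    }

    sizes
}
```

For example, a 256x256 texture ends up with nine levels (256, 128, 64, 32, 16, 8, 4, 2 and 1 pixels wide), and a non-square 8x2 texture keeps halving its width after its height has already reached one pixel.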

In this first implementation I will just serialize all of the PNGs for a texture's mip levels into one file that gets downloaded at runtime, but in the future I will need a smarter approach since, depending on a user's settings, they might not need the most detailed mip levels.

I'll worry about that later though. Handling that shouldn't have much of an impact on the overall design.

Other Notes / Progress

- Learning some Swift in order to build the iOS portion of an application that I'm licensing to another company. I'm expecting that picking up a new modern language will end up making me a better Rust programmer since I will be exposed to even more programming ideas and approaches.

- Getting started on adding support for coalescing freed bins to rectangle-pack. This will power runtime deallocation within Akigi.

Next Journal Entry

By the next journal entry I plan to finish implementing runtime texture allocation and deallocation, and be a good way through removing my existing compile time texture atlas code in favor of instead preparing the textures to be runtime allocated.

Well Wishes,

- CFN

115 - Slow, Again

April 18, 2021

Short week again this week as I work on closing on a software licensing deal for a separate piece of software that I am working on.

I'm still working on the runtime texture allocator. I honestly haven't made very much progress on Akigi over the last two months, otherwise this should have been done by now.

Now that I've sent out the contract for the software licensing deal I can turn my focus back to making progress here.

In general I need to figure out how to better balance Akigi progress with other forms of work that pay the bills at the moment. I'll keep trying new things and improving on this front.

Other Notes / Progress

- I released dipa this week and it looks like other people found it useful as well.

Next Journal Entry

Yet again, finish the runtime texture allocator.

Well Wishes,

- CFN

114 - Dipa Released

April 11, 2021

This week I'm releasing dipa, a framework for efficiently delta encoding large Rust data structures.
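For readers unfamiliar with the idea, here is a hand-written sketch of what delta encoding a struct can look like. This is not dipa's API or how dipa works internally. `PlayerState`, `PlayerStateDelta`, `create_delta` and `apply_delta` are hypothetical names used only to show the concept of sending just the fields that changed.

```rust
/// Hypothetical game state, used only for this illustration.
#[derive(Debug, Clone, PartialEq)]
struct PlayerState {
    x: i32,
    y: i32,
    health: u8,
}

/// A delta holds only the fields that changed. `None` means "unchanged".
#[derive(Debug, Default, PartialEq)]
struct PlayerStateDelta {
    x: Option<i32>,
    y: Option<i32>,
    health: Option<u8>,
}

/// Compares two states and records the new value of every field that differs.
fn create_delta(old: &PlayerState, new: &PlayerState) -> PlayerStateDelta {
    PlayerStateDelta {
        x: (old.x != new.x).then_some(new.x),
        y: (old.y != new.y).then_some(new.y),
        health: (old.health != new.health).then_some(new.health),
    }
}

/// Applies a delta in place, leaving unchanged fields untouched.
fn apply_delta(state: &mut PlayerState, delta: &PlayerStateDelta) {
    if let Some(x) = delta.x {
        state.x = x;
    }
    if let Some(y) = delta.y {
        state.y = y;
    }
    if let Some(health) = delta.health {
        state.health = health;
    }
}
```

A server could compute the delta between the last state a client acknowledged and the current state, send only that, and have the client apply it to its local copy. Writing this by hand for every type is tedious, which is the kind of work a derive-based library can take over.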

I've been working on the library for the last few weeks. I plan to make use of it in Akigi so I consider it to be work for the game, but it isn't the most interesting thing to write in a game development focused journal.

Now that it's released I'm going back to what I was working on before I decided to get it finished.

Namely, I'll be finishing the new runtime texture allocator and then making use of it in order to start rendering icons for game items.

Other Notes / Progress

- dipa took my procedural macro writing skills to the next level. I'm excited to keep learning more about writing macros in the years to come.

Next Journal Entry

Runtime texture allocator. And hopefully I'll have some screenshots of the game to share since it's been a while.

Well Wishes,

- CFN

113 - Delta Encoding

April 4, 2021

For the last couple of weeks I've been working on a library that I plan to use for delta encoding state changes in Akigi.

I should have it done in the next week or week and a half, and when it's ready I will be open sourcing it.

I'm excited to announce and share it. It'll be cool if other people find use out of it, but we'll see.

I'll talk more about it and share a link to it once it's ready.

Then we can get back to finishing the run time texture allocator and then get back to working on the crafting interface.

Other Notes / Progress

- Excited to finish and share the library.

Monthly Finances

The finances for March 2021 were:

| item | cost / earning |
|---|---|
| revenue | + $4.99 |
| Stripe fees | - $0.44 |
| AWS | - $199.46 |
| Adobe Substance | - $19.90 |
| GitHub | - $9.00 |
| Adobe Photoshop | - $10.65 |
| --- | --- |
| total | - $234.46 |

Next Journal Entry

I'll be finishing up the delta encoding library. Then after that I can get back to Akigi gameplay work.

I will be back next week. Goodbye for now.

- CFN

112 - Texture Allocator Progress

March 28, 2021

I started off this week working on building out the runtime texture allocator.

I made great progress on this front and have a basic allocator working. I'm in the middle of refactoring parts of Akigi to start making use of the new allocator.

During the last half of the week I took a break from working on the allocator and spent time working on a library that I'm planning to open source soon.

I think that the library will be a game changer for applications that deal with synchronizing state between clients and servers, so I'm very excited to get it working and released. More details to come.

Other Notes / Progress

- I've been in procedural macro land for a few days while working on this new library. I'm much more comfortable with writing proc macros than I was back in 2018 or so when I wrote Percy.

Next Journal Entry

I'm going to spend a bit more time working on that open source library that I plan to publish in the coming weeks.

I'll also be spending more time getting the texture allocator working in the game so that I can move on to working on game play again.

We'll get there,

- CFN

111 - Focus

March 21, 2021

I have an interview on the 22nd that I've been preparing for.

I haven't touched Akigi since my last journal entry.

I'm going to need to reflect on what led to that. Akigi and the engine are such a large part of my life that it's weird to go a week without them.

Oh, I have a name for the engine now. It came to me during the week. A few months ago I thought I had a name, but it didn't end up sticking with me so I ditched it.

From now on I will be referring to the engine and editor by their new name:

Let Go.

Other Notes / Progress

I can't remember the last time that I didn't touch Akigi for a week so I feel a bit off on that front.

A minor setback for a major comeback I suppose.

Next Journal Entry

The main thing to have in place by the next journal entry is a loose schedule for what I'm planning to deliver.

Any features that I build after that are a bonus. I'll be diving back into working on the run-time texture allocator.

I will be back next week. Goodbye for now.

- CFN

110 - Five Year Anniversary

March 14, 2021

Another anniversary. March 8th, 2021 marked five years since the first commit to the Akigi repository.

One of my main goals when starting Akigi was to work on a project that I was very enthusiastic about.

I wanted a project that was a never ending source of challenging technical problems.

So far, Akigi and the projects that it has spawned have lived up to that initial goal, and I don't see that slowing down over the next five years.

Another goal that I had was to have the game released and profitable. I haven't released Akigi yet, and so it certainly isn't profitable. I'm working to buck that trend, but it's a bit of a challenge to say the least. I'm working to get the game fun and live sooner rather than later.

Since Last Year

I built a lot over the last year and it was fun the entire way. A few things that stick out to me:

I introduced the game editor in 075. I remember going on runs last year excitedly working through thoughts on how to implement a terrain sculpting tool. Now it's alive and incrementally improving.

I implemented goal-oriented action planning in 061. That was a fun one since it was fairly complex and the end result of having NPCs making real time decisions felt cool and powerful.

Implementing additive animation blending in 098 was another fun one. I remember writing my first skeletal animation system back in 2016. Comparing that to the much more powerful system that I have today reminds me of how much I've learned.

Five Years from Now

I'm not sure where the project will be in five years, but there are a few things on my mind that would be cool to see.

I want the renderer to be industry grade. All of the modern graphics effects should be possible in the engine.

I've also been thinking about licensing the engine out to other web-focused companies.

Right now the game editor is still fledgling. I want it to continue to mature over the next five years.

I also want to have a great cross platform story, including iOS and Android.

Mainly, I think that a Rust-based industry-grade game engine with an excellent web story would bring something new to the table. The next five years should move me closer to that dream.

Other Notes / Progress

I've been swamped with various other responsibilities lately and my Akigi work has taken a temporary hit.

I should be back in gear at the beginning of April. In the meantime progress will be a bit slower than usual.

Next Journal Entry

If I can get the runtime texture allocator live by the next journal entry I'll call that a win.

I will be back next week. Goodbye for now.

- CFN

109 - Editor Undo Redo

March 7, 2021

This week I got undo and redo working in the editor.

This was important to get in place sooner rather than later so that I didn't build up a large number of edit operations that I would later have to implement undo operations for after the fact.

I'm happy with how the undo redo architecture turned out and continue to remain excited for the long term potential of the editor.

Runtime Texture Allocator

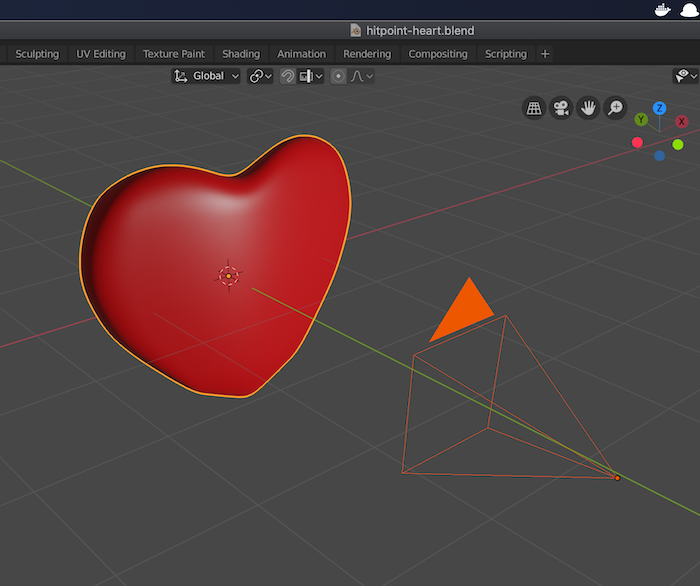

The ak dist game-asset CLI command prepares all of the game's assets. This includes work such as exporting meshes from Blender or rendering icons for items.

Part of this asset preparation process assembles the game's textures into one of several texture atlases that the game later downloads at run time.

This is a flawed approach since in order to get access to one texture you have to download an entire atlas that might contain textures that you don't currently need. Texture memory is a finite resource, so this waste can show itself quickly, especially on mobile devices.

I'm moving towards a different approach where individual textures are downloaded as needed at run-time and inserted into GPU textures in a location determined by a CPU side runtime texture allocator.

For now this allocator is just wrapping rectangle-pack, but in the future I can imagine having an enum of a few different allocators that I can choose from based on the different trade-offs.

This is a work in progress. I don't plan to implement de-allocation until some future time, so I should be able to slot rectangle-pack in as it exists today. In the future, though, I'll need to give rectangle-pack support for re-introducing free space back into a bin so that it can be used to handle de-allocations.
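To make the idea of a CPU-side allocator concrete, here is a minimal sketch that hands out placements inside a fixed-size GPU texture. It uses a simple "shelf" strategy rather than rectangle-pack, and every name in it (`ShelfAllocator`, `Placement`, `allocate`) is hypothetical and not taken from the Akigi codebase. Like the plan described above, it has no de-allocation.

```rust
/// Where a texture should be copied inside the atlas texture.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct Placement {
    pub x: u32,
    pub y: u32,
    pub width: u32,
    pub height: u32,
}

/// A simple shelf allocator: fills a row left to right and opens a new row
/// below it when the current row has no room left. Nothing is ever freed.
pub struct ShelfAllocator {
    atlas_width: u32,
    atlas_height: u32,
    cursor_x: u32,
    shelf_y: u32,
    shelf_height: u32,
}

impl ShelfAllocator {
    pub fn new(atlas_width: u32, atlas_height: u32) -> Self {
        Self { atlas_width, atlas_height, cursor_x: 0, shelf_y: 0, shelf_height: 0 }
    }

    /// Returns `None` if the texture does not fit. State is only updated on success.
    pub fn allocate(&mut self, width: u32, height: u32) -> Option<Placement> {
        if width == 0 || height == 0 || width > self.atlas_width {
            return None;
        }
        // Decide whether the texture goes on the current shelf or a new one.
        let (x, y, shelf_height) = if self.cursor_x + width > self.atlas_width {
            (0, self.shelf_y + self.shelf_height, 0)
        } else {
            (self.cursor_x, self.shelf_y, self.shelf_height)
        };
        if y + height > self.atlas_height {
            return None;
        }
        self.cursor_x = x + width;
        self.shelf_y = y;
        self.shelf_height = shelf_height.max(height);
        Some(Placement { x, y, width, height })
    }
}
```

The renderer would then copy each downloaded texture into the GPU texture at the returned `x` and `y`. A packing library such as rectangle-pack should waste less space than this sketch, which is one reason an enum of interchangeable allocators could be useful.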

Other Notes / Progress

Switched to using a g4ad 4x large on demand EC2 instance to power the linux portion of my GitHub Actions CI.

This is because the asset compilation process now uses wgpu-rs in CI when generating icons for textures, which means that I needed the CI instance to have a GPU.

wgpu-rs is using Vulkan under the hood on Linux, so I had to fiddle a bit with installing Vulkan drivers.

I also needed to ditch Docker in CI since I couldn't find a way to interface with the host machine's AMD GPU from within a Docker instance.

I keep the instance shut down when I am not using it since it costs over $300/month to leave online at all times.

Since it's almost always off I should only end up paying around $5/mo or less for it.

Monthly Finances

The finances for February 2021 were:

| item | cost / earning |
|---|---|
| revenue | + $4.99 |
| Stripe fees | - $0.44 |
| aws | - $234.66 |
| adobe substance | - $19.90 |
| GitHub | - $9.00 |
| adobe photoshop | - $10.65 |
| adobe illustrator cancellation fee | - $44.76 |
| Datadog Inc | - $7.87 |
| --- | --- |
| total | - $322.29 |

Next Journal Entry

March 8th is the five year anniversary of the first commit to the Akigi codebase.

I'll be reflecting on that in next week's journal entry.

I will be back next week. Goodbye for now.

- CFN

108 - In Need of a Deadline

February 28, 2021

I did not get a ton of work done this week, so not much to report on.

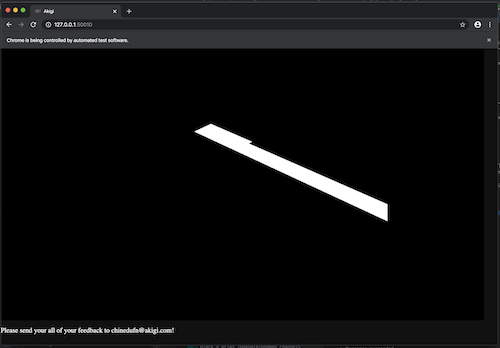

I abstracted out the code for rendering meshes and have started leveraging it in the item icon generation plugin.

Right now I am not rendering any meshes and am instead just rendering a clear canvas. This week I will work on rendering real meshes in order to generate the item's icon.

Other Notes / Progress

- Moved away from typetag, and for now I will be maintaining an enum for serializing and de-serializing asset variants.

Next Journal Entry

The first thing to get done this week is to start rendering real meshes in the item icon generator.

I also need to get back to finishing up the undo/redo feature in the editor.

Most importantly, I will be taking time to break down what I need to do to have a playable game live.

I need to figure out a deadline for a release. After which I can continue to improve and add new features.

I will be back next week. Goodbye for now.

- CFN

107 - Item Icon Plugin

February 21, 2021

This past week I worked on an asset compilation plugin that iterates over all of the items in the items.yml definition file and generates icons for them.

A big part of this workstream is refactoring out code from the game-app crate into a more general purpose crate so that the item-icon-plugin, as well as other future plugins, can easily render things.

Along the way I began to port the game-app crate to the new approach to loading and storing assets.

In short, most of the work here is to refactor and move existing logic until it is easily re-usable from anywhere.

Other Notes / Progress

- The new asset loading process relies on typetag, but typetag does not work when targeting WebAssembly. So I will need to move away from it.

Next Journal Entry

I hope to finish the item icon generation asset compilation plugin by the next journal entry.

After that I will make some changes to how textures are loaded and buffered at run-time in order to set a foundation for being able to load and unload individual textures on the fly.

Currently all textures are packed into atlases during asset compilation. This means that you have to download a bunch of textures that you are not using.

After my changes texture atlases will be created at run-time with only the textures that you need.

I will be back next week. Goodbye for now.

- CFN

106 - Crafting UI Early Peek

February 14, 2021

Since the last journal entry I continued to make progress on the crafting interface.

I also started working on supporting undo and redo in the editor.

Crafting UI

Because the crafting UI is the first complex interface in the game, building it is requiring me to lay a good bit of UI foundation.

This past week continued along that trend.

I introduced a UiComponent trait as well as a UiButton to make it easier to make buttons.

    /// A component that can be converted into UIElement's to be pushed to a UserInterfaceResource.
    pub trait UiComponent<UiId: Hash, N, I: KeyboardInputNormalizer<N>, TextAreaId> {
        /// Create UIElement's from this component and push them to the UserInterfaceResource.
        fn push_ui_elements(
            self,
            user_interface: &mut UserInterfaceResource<UiId, N, I, TextAreaId>,
            viewport_id: ViewportId,
        );
    }

Over time I will continue to add more components.

I also made improvements to the GridLayout type to make certain layouts easier, such as laying out items in a row.

The crafting interface still needs textures as well as some positioning cleanup and functionality such as dragging and dropping ingredients in between ingredient slots. So there is more work to be done.

Item Icon Generation

I started working on an asset compilation plugin that can generate icons for every item in the game.

I mentioned a need for this around a month and a half ago in 099.

Once this works every item in the game will have an icon.

This will make it much easier to know what ingredients you are attempting to use while crafting.

Editor Undo Redo

I spent some time early in the week thinking through the design of the editor's undo/redo system.

One interesting case that I needed to design for was undoing a brush stroke.

If a user is in terrain sculpting mode and clicks and drags their mouse, multiple edits are applied.

If they press undo they should go back to the moment before they first pressed down the mouse.

This meant that undo could not simply undo the last edit. It needed to be possible to go back to some state from multiple edits ago.

I ended up landing on a design where when we are pushing a new UndoDescriptor to the undo stack I use a match statement to determine whether or not to simply combine the new descriptor with the one at the top of the stack.

This allows me to have one big UndoDescriptor to be able to undo multiple terrain sculpting edits.

    impl UndoRedo {
        /// Push an undo descriptor onto the undo stack.
        ///
        /// If the stack is full the item at the bottom of the stack will be removed.
        pub fn push_undo_desc(&mut self, undo_desc: UndoDesc) {
            if self.undo_stack.len() == UNDO_STACK_MAX_SIZE {
                self.undo_stack.remove(0);
            }

            if let Some(stack_top) = self.undo_stack.last_mut() {
                match (stack_top, undo_desc) {
                    (
                        UndoDesc::TerrainDisplacement(stack_top),
                        UndoDesc::TerrainDisplacement(incoming),
                    ) if stack_top.stroke_id() == incoming.stroke_id() => {
                        stack_top.combine_with(incoming);
                    }
                    (_, undo_desc) => {
                        self.undo_stack.push(undo_desc);
                    }
                };
            } else {
                self.undo_stack.push(undo_desc);
            }
        }
    }

I still need to create UndoDescriptor's for all of the existing edit operations that were created before I introduced undo/redo. I plan to take care of that this week.

Other Notes / Progress

- Canceled my Adobe Illustrator subscription since I have not touched it in months. Had to pay a $41 cancellation fee.

- Planning to start using a g3 4x large EC2 instance for CI jobs since once I have the item icon generation working I will need access to a GPU when compiling assets. At over $1/hr a g3 4x large is too expensive for me to leave running at all times, so I will start it up whenever I need to run CI and set up a CloudWatch alarm to have it shut down whenever it has been idle for 15 minutes.

Next Journal Entry

My focus this week will be getting the item icon generation asset compilation plugin working.

After that I will set up a self hosted GitHub Actions runner on an EC2 instance that has a GPU. I'm expecting to fiddle a bit with installing Vulkan drivers.

If I get all of that working this week I will get started on implementing drag and dropping items into crafting ingredient slots.

I will be back next week. Goodbye for now.

- CFN

105 - Slow Week

February 7, 2021

This week I worked on the crafting user interface. The buttons are technically working, but the interface is just quickly thrown in and needs to be cleaned up.

I should have had it all cleaned by this journal entry, but I did not get as much work done last week as usual.

I made a dating app account and spent quite a bit of time on it, which took away from time that is usually spent focusing on Akigi.

Thankfully I think that I've re-gained control and don't feel as much of an impulsive urge to check and use the app.

So I should be getting a normal amount of work done by the next journal entry.

Other Notes / Progress

- Some ergonomic improvements to the engine's user interface crate. Quite a ways away from having something that feels seamless, but I'll get there over time.

January Finances

The finances for January 2021 were:

| item | cost / earning |
|---|---|
| revenue | + $4.99 |
| Stripe fees | - $0.44 |
| aws | - $225.79 |
| adobe substance | - $19.90 |
| GitHub | - $9.00 |
| adobe photoshop | - $10.65 |
| adobe illustrator | - $22.38 |
| Datadog Pro | - $8.12 |
| MacBook Pro | - $3,899 |
| --- | --- |
| total | - $4,190.29 |

Next Journal Entry

For the next journal entry I will be focused on getting the crafting interface cleaned up.

I'll also continue to work on the game editor.

Some of the upcoming features are undo/redo, some enhancements to terrain editing, and introducing the ability to edit tiles so that I can control which tiles can be walked on.

I will be back next week. Goodbye for now.

- CFN

104 - More Crafting Progress

January 31, 2021

This week I finished implementing the code for being able to craft all of the intermediary items that go into crafting bows.

It is now very easy to add new items and crafting recipes into the game.

Next I need to create the models and textures for all of the new items, as well as the user interface for crafting.

There is a fair chunk of UI work to do, but as usual most of it is foundation work that will make future UI work easier.

Editor Improvements

I added the ability to delete scenery from within the editor by pressing x followed by Return.

Other Notes / Progress

Slowly but surely it is getting easier to add in new game play.

The inflection point is near.

Next Journal Entry

The top priority for this week is to work on adding the crafting interface into the game.

I will also be implementing Undo/Redo in the editor.

I will be back next week. Goodbye for now.

- CFN

103 - Editor Placement Previews

January 24, 2021

I got back into routine this week after spending the end of last week and the first couple days of this week at my sister's house.

No big milestone this week, just steady progress on the important fronts.

Crafting Improvements

I'm making strides in the crafting system. All of the progress is still on the data structures, functions, unit and integration tests side of things so there isn't anything visual for me to share yet.

My approach to adding a new feature such as the crafting system is to first create an integration test for it where I simulate one or more players engaging with the gameplay.

I then bounce between adding an assertion to the integration test and implementing and unit testing all of the new functionality required to make that assertion pass.

This approach has been instrumental for me in a number of ways.

One example is that it gives me clear direction on exactly what needs to be built to support real game play.

Without a gameplay oriented integration test it can be difficult to know what functionality to focus on now as opposed to functionality that I can circle back to later.

For example, there are multiple unimplemented match expression branches within the function that checks to see if you meet the criteria for a recipe because I know that none of the things that you are currently able to craft touch those code paths.

When those code paths get hit by real crafting recipes, I will implement them.
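As a rough illustration of leaving match branches unimplemented until a real recipe needs them, here is a minimal sketch. The `Criterion` enum, its variants and the `meets` function are all hypothetical stand-ins, not the engine's actual criteria types.

```rust
/// Hypothetical recipe criteria; the engine's real criteria types are not shown here.
#[derive(Debug)]
pub enum Criterion {
    /// The submitted ingredients include at least `quantity` of `item`.
    HasAtLeast { item: &'static str, quantity: u32 },
    /// Exactly this many distinct ingredients were submitted.
    IngredientCountExactly(usize),
    /// No current recipe uses this, so its branch is deliberately left unimplemented.
    SkillLevelAtLeast { skill: &'static str, level: u32 },
}

/// Checks one criterion against the submitted `(item name, quantity)` pairs.
pub fn meets(criterion: &Criterion, ingredients: &[(&str, u32)]) -> bool {
    match criterion {
        Criterion::HasAtLeast { item, quantity } => ingredients
            .iter()
            .any(|(name, count)| name == item && count >= quantity),
        Criterion::IngredientCountExactly(count) => ingredients.len() == *count,
        // Panics loudly if a recipe ever reaches this path before it is built.
        Criterion::SkillLevelAtLeast { .. } => {
            unimplemented!("no craftable recipe checks skill levels yet")
        }
    }
}
```

The compiler still forces every variant to be listed, so the unfinished paths stay visible in the code while the integration test decides which one gets implemented next.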

Here's a snippet of the integration test for crafting a bow thus far:

    // ... snippet ...

    #[test]
    fn bowcraft_craft_bow() -> TestResult {
        let game_thread = GameThread::new_with_game_server_config_modifier(
            client_comps_source_new_player_at_pos(PLAYER_ID_1, PLAYER_POS),
            |config| {
                config.initial_entities = Some(InitialEntitiesConfig::new(initial_spawns()));
            },
        );
        let player = game_thread.connect_player_tick_until_in_world(PLAYER_ID_1)?;

        make_sharp_stone(&player, &game_thread)?;
        make_twisted_sinew(&player, &game_thread)?;
        make_sticky(&player, &game_thread)?;

        todo!();

        game_thread.shutdown()
    }

    // ... snippet ...

    fn make_sticky(player: &ConnectedPlayer, game_thread: &GameThread) -> TestResult {
        make_clay_bowl(player, game_thread)?;
        harvest_acacia_tree_sap(player, game_thread)?;

        player.pickup_entity(EntLookup::DisplayNameOne("Clay"));
        player.tick_until_has_item_with_quantity(ItemId::Clay, 1, &game_thread, 10);
        make_wet_clay(player, game_thread)?;

        let sticky_request = CraftingRequest::new(
            CraftingAction::Mix,
            &[ItemId::BowlOfAcaciaTreeSap, ItemId::WetClay],
        );
        player.send_crafting_request_wait_for_ack(sticky_request)?;

        player.tick_until_has_item_with_quantity(ItemId::BowlOfSticky, 1, &game_thread, 10);
        player.assert_has_item_with_id_and_quantity(ItemId::BowlOfAcaciaTreeSap, 0);

        Ok(())
    }

    // ... snippet ...

This week I added support for stations. An entity can have a StationComponent that allows it to be used as a station during crafting.

For example, when you want to harden a clay bowl you need a station with a Fire {hotness: Tier} component with a hotness of at least Tier::Two.

One way to satisfy the requirement is by using a Fire Pit entity as a station, but any hot enough entity will do just fine.
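A minimal sketch of how such a station check could look is below. Only `Fire { hotness: Tier }` and `Tier::Two` come from the text above; the `Station` struct, the other tier names and the `hot_enough` function are assumptions made for illustration.

```rust
/// Heat tiers, ordered from coolest to hottest. Only `Tier::Two` is named in
/// the journal; the other variants are assumed.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
pub enum Tier {
    One,
    Two,
    Three,
}

/// A fire component with a hotness tier.
#[derive(Debug, Clone, Copy)]
pub struct Fire {
    pub hotness: Tier,
}

/// Hypothetical view of an entity that can be used as a crafting station.
pub struct Station {
    pub display_name: &'static str,
    /// `None` when the entity has no fire component at all.
    pub fire: Option<Fire>,
}

/// True when the station has a fire at least as hot as `minimum`.
pub fn hot_enough(station: &Station, minimum: Tier) -> bool {
    station
        .fire
        .map_or(false, |fire| fire.hotness >= minimum)
}
```

Because the check only looks at the component and not at which entity it belongs to, a Fire Pit and any other sufficiently hot entity are treated the same way.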

Editor Improvements

Last week I simplified the editor's game pane by culling it down to two main modes, Play Mode and Edit Mode.

Within the Edit Mode there are the sub-modes of Object and Terrain, each geared to different editing activities.

While in GamePane -> Edit Mode -> Object Mode you are able to edit and place scenery and entities within the game world.

Towards the end of the week I added the ability to see a preview of what you are placing.

Renderables that the editor injects into the game's render descriptor can not be textured currently, so the previews are rendered as all red for now.

Networking Improvements

While I was at my sister's I took a break from my usual work and worked on different operations related tasks.

The last of these was making the rate that client updates were sent out more consistent, which ended up running over into a couple of days after I came back from my sister's house.

Previously the amount of time between updates could vary based on how long a game tick took to run.

Before this change, if game ticks were scheduled 600ms apart and one tick took 5ms to complete while the next took 305ms, the two client updates would be sent 900ms apart.

After this change all updates are sent 600ms apart, regardless of how long the tick took to run.
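The 900ms figure can be checked with a small sketch. It assumes that ticks start on a fixed 600ms schedule, that the old behavior sent each update the moment its tick finished, and that the new behavior sends on the tick boundary. The function names are made up for this example.

```rust
/// Send times (ms) when each update goes out as soon as its tick finishes.
/// Tick `i` is assumed to start at `i * tick_interval_ms`.
pub fn send_times_when_tick_finishes(tick_interval_ms: u64, tick_durations_ms: &[u64]) -> Vec<u64> {
    tick_durations_ms
        .iter()
        .enumerate()
        .map(|(i, duration)| i as u64 * tick_interval_ms + duration)
        .collect()
}

/// Send times (ms) when updates go out on a fixed schedule, independent of
/// how long each tick took (assumed here to be the end of each tick's slot).
pub fn send_times_fixed(tick_interval_ms: u64, tick_count: usize) -> Vec<u64> {
    (1..=tick_count as u64).map(|i| i * tick_interval_ms).collect()
}

/// Time between consecutive sends.
pub fn gaps(send_times_ms: &[u64]) -> Vec<u64> {
    send_times_ms.windows(2).map(|pair| pair[1] - pair[0]).collect()
}
```

With tick durations of 5ms and 305ms the first approach sends at 5ms and 905ms, a 900ms gap, while the fixed schedule always produces 600ms gaps.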

The advantage of this change is that it will make latency compensation easier on the client side.

As long as the variance in your ping isn't above some very forgiving threshold, the client can dynamically adjust different interpolation speeds so that you don't notice variations in latency.

I have not started on latency compensation on the client side. I will need to implement that at some point.

Other Notes / Progress

- Bought a new laptop that will arrive in a couple of weeks. Going to use my current laptop for consulting work and my new laptop for working on Akigi. This was mainly motivated by the fact that I need to send my current laptop in to get the keyboard repaired and I don't want to go five days without a laptop. But, I don't plan to return the new one since I'm very interested in exploring how having multiple machines for different purposes can help to boost my focus. When I some day end consulting I may dedicate the older machine to art or sound or something else.

Next Journal Entry

By the next journal entry I plan to have finished implementing the ability to craft a bow on the code and data side of things.

From there I will need to add in the user interface for crafting as well as new models for all of the new resources and raw materials that I have added, which I will start working on but will not be finished by the next journal entry.

I will also continue making progress on the editor. I plan to add the ability to move and delete scenery.

I also plan to fix an issue with terrain editing where painting terrain is not working at the edges of terrain chunks.

I will be back next week. Goodbye for now.

- CFN

102 - Brief Deviation

January 17, 2021

I was rocking and rolling during the first few days of the week, but on Thursday I went to visit my sister and I will not be back home until Tuesday.

During this travel time I decided to do performance and operations work since I find it easy to stay focused on that even when I am not in my normal routine.

When I am back home I will go back to working on game play features.

Crafting

I am still working on being able to harvest and craft all of the intermediary resources and items that go into making a bow.

As I go I am introducing the various data structures and logic that powers crafting, which is time consuming this first time around.

Adding new items to craft will be much easier once all of this foundation is in place.

Editor Improvements

I made it so that if you are in a game pane you can press tab to switch between playing and editing mode.

When you are in edit mode you can switch between sub modes such as terrain editing or object editing mode using the spacebar followed by a letter.

So Space -> T for terrain edit mode or Space -> O for object edit mode.

Performance Fix

I performance profiled pathfinding while on the plane to my sister's state.

I fixed the performance regression that I introduced in 101.

The average time to complete a game server tick. Lower is better.

Other Notes / Progress

- Moved from Datadog to an ELK stack running inside of my Kubernetes cluster. At some point I will look into Prometheus. I'm new to dev ops so I'm not sure when and how to use all of these tools. I'm learning as I go.

Next Journal Entry

I will be back to my regular routine on Wednesday at which point I will get back to working on the bow crafting system.

In the meantime I am working on tweaking the way that players get updated with new game state.

Previously the time that updates were sent could vary based on how long the game tick took to run.

After this change they will always get sent at the same interval regardless of how long the tick took to run.

I will be back next week. Goodbye for now.

- CFN

101 - Deliberate

January 10, 2021

Every now and again I read a book that has an unworldly impact on how I think about my experiences in this life.

The latest to join this short list is Walden, by Thoreau. I am about half way through his book.

My mind has not stopped churning since the first page.

I am sure that the book has drawn a fair share of criticism. As most extreme choices and actions do. I can only imagine what would turn up if I entered "Walden critique" into the searching device.

For me though, the idea of living deliberately, as Henry did in the woods for a two year stretch, is inspiring.

Does having a drawer full of clothes add to, or take away from my life? Are my thoughts and writing wholly owned by me, or are the social norms of this era living rent free in my mind?

These are not rhetorical questions. I have a lot of thinking to do this year. What is my version of a deliberate life?

Making Akigi Slow

Earlier in the week I was struggling to focus. So I took a break from working on gameplay to work on a performance optimization.

I profiled. I benchmarked. I thought that I approached things well.

Oh no. That so-called optimization that looked like a 20x speedup in my benchmark made the game server roughly ten times slower in production.

Made the game server 10x slower. Oh my.

This is the danger of micro-benchmarking. Next time around I will add a macro-benchmark or two, and see if I can diagnose what happened.

Crafting System

I spent quite a bit of time planning Akigi's crafting system and eventually landed on something that I liked.

Implementing it on the backend turned out to be easier than anticipated. I have not thought through the user interface for it yet.

Right now I am working on the ability to craft a bow. This is serving as a good test bed for implementing the underlying functionality that is needed for Akigi's crafting system.

Once this all works adding new things to craft will be as simple as adding new data to a YAML file.

Here's an example entry in that YAML file. My engine's crafting and criteria systems are generic across a few different aspects, making it easy to come up with and evolve a data format to power crafting.

    - requirements:
        action: Twist
        ingredients:
          Batch:
            batch:
              - Passes:
                  OnlyOne:
                    GteqQuantity: [StagSinew, 1]
              - Passes:
                  IngredientCountExactly: 1
            pass_requirement: All
      consumed_items:
        - item_id: StagSinew
          quantity: 1
      received_items:
        - item_id: TwistedSinew
          quantity: 1

As most Rust developers would expect, serde powers the de-serialization.

Other Notes / Progress

The crafting system is coming together really nicely. At least implementation wise.

When I finish implementing what I need and adding in more crafting recipe definitions I will need to create the user interface for crafting.

Then I can play with it to see how fun it feels.

I'm feeling very excited because when the crafting system is in place players can make things to their heart's content.

I feel so close to the inflection point where I have a real, playable game and can start to iterate on taking it from okay to good to great to amazing.

Feelings can be wrong. That I know. But the feeling is there. And it makes me feel good.

Next Journal Entry

I'm going to keep pushing forwards on the crafting system. At some point this month I should be able to show you some visual progress. For now I'm deep in the land of data structures and writing test cases.

I will be back next week. Goodbye for now.

- CFN

100 - Motivated

January 03, 2021

Hello, and welcome to the start of another year.

At the beginning of last year I had high hopes. I thought that I would have a fun alpha version of Akigi ready in April 2020.

These dreams ended up being far from reality.

It was not until the end of October that I even started to work on player facing functionality consistently.

And even still today most of my time is spent setting the underlying engine foundation for whatever gameplay I am working on at the time.

This is what I signed up for by choosing to make my own engine for Akigi.

Fortunately, I don't at all regret taking this long route to the destination. I'm very happy with the trajectory of my engine and I know that there will be an inflection point where I begin to move with top pace.

When will that be? I'm not entirely sure.

I made a list in 099 of the things that I thought were left before I could move at the pace that I want to.

But because I have never released a game before, there are bound to be additional major speed blockers that I notice between now and when that list of tooling is completed.

So, all I am doing for now is sticking to my script. Focus on putting out more game play, and let everything else fall into place around that.

I'd like for 2021 to go differently. I think that it will, but I am admittedly hesitant to say that since I have said it before and I was wrong.

I feel like I am close to the inflection point. I feel like I am nearing the moment where I can start to add in fun game play every week. With larger new updates every month. I feel close, but I am scared to say it because I have said it before and been wrong.

I think that Akigi will be a special game. Not to all, but to some. I have so many gameplay ideas that I want to implement. Some will strike a chord, others might flop.

I'm still motivated. I'm still hungry. I still have the utmost faith in my codebase to help me push Akigi to where it needs to be. I still know that with continued practice my art will be unique to me and enjoyed by some.

I will just keep pushing, and when the light at the end of the tunnel becomes unmistakable I will announce the first official version of Akigi.

It will all work out in the end. That I am sure of.

Creating Bows and Arrows

As mentioned in the last journal entry, I've begun work on being able to create bows and arrows.

I spent a solid amount of time planning out how crafting in Akigi will work.

I'm very happy with how I approached this.

I did not just sit down and try to come up with functionality out of thin air. Instead, I started from the feelings and emotions and thoughts that I wanted the crafting system to create, and then from there I landed on how it should work.

Now, whether I will successfully deliver on my goals here is another story, but I'm happy with the plan that I have in place.

Because this is the first real introduction of crafting into the game, there will be some time that I need to spend adding in various foundational implementations for crafting.

As such, I'm expecting to spend January on the crafting of bows and arrows.

After that, however, it should be much easier to add in new crafting experiences.

This journal entry is getting a bit long, so I'll write about what my plans are for crafting in Akigi in the next journal entry.

Auto Generating Item Data Structures

Crafting bows and arrows in Akigi involves collecting and creating a number of intermediary items before you assemble your final product.

Before this week anytime I needed to add a new item to the game I needed to adjust a number of data structures and methods.

For example, there's a c-like ItemId enum with different methods such as .equipment_id() -> Option<EquipmentId> which returns the corresponding equipment id for an item, or None if it is not equippable.

Needing to update a dozen or so different parts of the codebase every time I added an item made me not want to add in new items.

This week I added an items.yml file where I can define items, and then wrote a build script that automatically generates all of the data structures and methods that I need.

    # Example item definitions
    BeastCage:
      id: 26
      display_name: "Beast Cage"
      ground_renderable_id: BeastCageNotBent
    ButchersKnife:
      id: 28
      display_name: "Butcher's Knife"
      ground_renderable_id: ButchersKnife
      equippable:
        renderable_id: ButchersKnife
        slot: MainHand
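Below is a minimal sketch of the code generation half of such a build script. It assumes the YAML has already been parsed into a list of `ItemDef` values, which is a hypothetical struct, and it only generates the `ItemId` enum and the `equipment_id` method mentioned above. A real build script would also read items.yml and write the result to a file that the crate includes.

```rust
/// Hypothetical, already-parsed item definition (the YAML parsing step is omitted).
pub struct ItemDef {
    pub name: &'static str,
    pub id: u16,
    pub equippable: bool,
}

/// Generates Rust source for an `ItemId` enum plus an `equipment_id` method.
pub fn generate_item_code(items: &[ItemDef]) -> String {
    let mut out = String::new();

    // One enum variant per item, using the id from the definition file.
    out.push_str("#[derive(Debug, Clone, Copy, PartialEq, Eq)]\n");
    out.push_str("pub enum ItemId {\n");
    for item in items {
        out.push_str(&format!("    {} = {},\n", item.name, item.id));
    }
    out.push_str("}\n\n");

    // One match arm per item: `Some(..)` for equippable items, `None` otherwise.
    out.push_str("impl ItemId {\n");
    out.push_str("    pub fn equipment_id(&self) -> Option<EquipmentId> {\n");
    out.push_str("        match self {\n");
    for item in items {
        let value = if item.equippable {
            format!("Some(EquipmentId::{})", item.name)
        } else {
            "None".to_string()
        };
        out.push_str(&format!("            ItemId::{} => {},\n", item.name, value));
    }
    out.push_str("        }\n    }\n}\n");

    out
}
```

Adding an item then means adding one entry to the definition file and rebuilding, instead of touching every enum and match statement by hand.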

WebGPU Renderer

Last week I mentioned that one of the key missing tools that I need is the ability to auto generate icons for items.

The idea would be to iterate over all of the items in the game and use the engine's Renderer trait to render the item and save the rendering to a PNG file.
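A minimal sketch of that loop is below. The `Renderer` trait here is a hypothetical stand-in with a single made-up method, and `SolidColorRenderer` is a fake backend that exists only so the example runs without a GPU. Encoding the pixel buffers to PNG is left out.

```rust
/// Hypothetical stand-in for the engine's `Renderer` trait.
pub trait Renderer {
    /// Renders one item and returns tightly packed RGBA8 pixels.
    fn render_item(&mut self, item_name: &str, width: u32, height: u32) -> Vec<u8>;
}

/// A fake backend that fills the whole image with a single color.
pub struct SolidColorRenderer {
    pub rgba: [u8; 4],
}

impl Renderer for SolidColorRenderer {
    fn render_item(&mut self, _item_name: &str, width: u32, height: u32) -> Vec<u8> {
        let byte_count = (width * height * 4) as usize;
        self.rgba.iter().copied().cycle().take(byte_count).collect()
    }
}

/// Renders a square icon for every item name using whichever backend is passed in.
pub fn generate_icons<R: Renderer>(
    renderer: &mut R,
    item_names: &[&str],
    size: u32,
) -> Vec<(String, Vec<u8>)> {
    item_names
        .iter()
        .map(|name| (name.to_string(), renderer.render_item(name, size, size)))
        .collect()
}
```

Because `generate_icons` only depends on the trait, the same plugin code could run against any backend that works on the machine doing the asset compilation.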

Both my linux and mac CI servers run the full asset compilation process.

As it stands now I have two implementations of the Renderer trait, the MetalRenderer and the WebGlRenderer.

Neither can be used to power linux CI.

The WebGlRenderer runs in the browser so getting PNGs out of there would be a hassle. The MetalRenderer only works on macOS, so that is also ruled out.

For this reason I started working on a third Renderer implementation, the WebGpuRenderer.

I do plan to have a VulkanRenderer in the future that I will use on Linux and Windows, but for now the WebGpuRenderer is higher priority since I can use it in the browser to replace the WebGlRenderer whenever the major browsers support WebGPU.

My linux CI server is an EC2 instance. I will need a linux server that has a GPU once the asset compilation process starts auto generating icons.

So, I'll be buying a refurbished cheap machine and loading linux on it as a replacement for the EC2 instance.

This should cut $40/month or so off of my AWS bill.

Other Notes / Progress

- Fixed logic issue where attacks did not cool down while you were out of combat.
- Fixed the lower body walk animation stuttering if you were walking while attacking.

December's Finances

The finances for December 2020 were:

| item | cost / earning |
|---|---|
| revenue | + $4.99 |
| Stripe fees | - $0.44 |
| AWS | - $240.26 |
| Adobe Substance | - $19.90 |
| GitHub | - $9.00 |
| Adobe Photoshop | - $10.65 |
| Adobe Illustrator | - $22.38 |
| Datadog | - $8.12 |
| --- | --- |
| total | - $305.76 |

Next Journal Entry

I'm going to continue working on being able to craft bows and arrows.

The amount of art that I do per week has started to slow down, so I'm going to think about why that is and make some adjustments to my process.

Cya next time!

- CFN

099 - More Gameplay, More Quickly

December 27, 2020

This week I fixed a long-standing issue with how skeletal animation worked within my engine.

Previously, my script that exports bone data from Blender would export the transforms in world space and did not export the bone hierarchy.

This was fine if you only ever played one animation on a character at a time, and if you didn't modify any bones at runtime.

But if, for example, the lower body was crouching and the upper body was punching, the upper body would not move downwards along with the lower body since none of the bones were parented to each other.

Similarly, an additive animation that bent the torso wouldn't transform any of its descendants in the bone hierarchy.

I now export bone keyframes from Blender in local space, along with a bone hierarchy that points to the parent of each bone.

The engine's skeletal animation system uses the local space transforms and bone relationships in order to calculate each bone's world transformation at runtime.

This fixes issues such as separate upper and lower body animations creating strange deformations.

The upper body playing a fire bow animation while the lower body plays a walk animation.
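The local-to-world calculation described above can be sketched as follows. This is a generic illustration of the technique, not the engine's actual code. The Bone struct, the row-major Mat4 layout with column vectors, and the assumption that every parent appears before its children in the bone list are all choices made for this example.

```rust
/// 4x4 matrix stored row-major as m[row][col], applied to column vectors.
pub type Mat4 = [[f32; 4]; 4];

/// Standard matrix product a * b.
pub fn mul(a: &Mat4, b: &Mat4) -> Mat4 {
    let mut out = [[0.0f32; 4]; 4];
    for row in 0..4 {
        for col in 0..4 {
            for k in 0..4 {
                out[row][col] += a[row][k] * b[k][col];
            }
        }
    }
    out
}

/// Builds a translation matrix (translation lives in the last column).
pub fn translation(x: f32, y: f32, z: f32) -> Mat4 {
    [
        [1.0, 0.0, 0.0, x],
        [0.0, 1.0, 0.0, y],
        [0.0, 0.0, 1.0, z],
        [0.0, 0.0, 0.0, 1.0],
    ]
}

/// Hypothetical bone: a local-space transform plus the index of its parent.
pub struct Bone {
    pub parent: Option<usize>,
    pub local: Mat4,
}

/// Computes each bone's world transform as parent_world * local.
/// Assumes bones are sorted so that every parent comes before its children.
pub fn world_transforms(bones: &[Bone]) -> Vec<Mat4> {
    let mut world: Vec<Mat4> = Vec::with_capacity(bones.len());
    for bone in bones {
        let transform = match bone.parent {
            Some(parent_index) => mul(&world[parent_index], &bone.local),
            None => bone.local,
        };
        world.push(transform);
    }
    world
}
```

With this setup, lowering a root bone by half a unit (a crouch) lowers every descendant by the same amount, because each child's world transform is built on top of its parent's.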


Aiming

This week I introduced the ability to aim at a different area depending on the enemy.

Here's an example of aiming at a snail on the ground vs. a flying swarm of mosquitoes.

This boiled down to re-using the existing engine support for defining points on renderables, and then adding some support for being able to aim at one of these defined points.
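As a rough illustration of aiming at a named point, here is a minimal sketch. The Renderable and Enemy structs, the point names, and aim_direction are hypothetical, and the sketch ignores the enemy's rotation and scale for brevity, so it should be read as an outline of the idea and not as how the engine does it.

```rust
use std::collections::HashMap;

pub type Vec3 = [f32; 3];

/// Hypothetical renderable with named points defined in its local space.
pub struct Renderable {
    pub points: HashMap<String, Vec3>,
}

/// Hypothetical enemy that names which of its renderable's points to aim at.
pub struct Enemy<'a> {
    pub position: Vec3,
    pub renderable: &'a Renderable,
    pub aim_point: &'a str,
}

/// Returns the normalized direction from the shooter to the enemy's aim point.
/// Returns None if the point is not defined or the shooter is exactly on it.
pub fn aim_direction(shooter: Vec3, enemy: &Enemy) -> Option<Vec3> {
    let local = enemy.renderable.points.get(enemy.aim_point)?;
    // Rotation and scale are ignored here: the point is only offset by position.
    let target = [
        enemy.position[0] + local[0],
        enemy.position[1] + local[1],
        enemy.position[2] + local[2],
    ];
    let delta = [
        target[0] - shooter[0],
        target[1] - shooter[1],
        target[2] - shooter[2],
    ];
    let length = (delta[0] * delta[0] + delta[1] * delta[1] + delta[2] * delta[2]).sqrt();
    if length == 0.0 {
        return None;
    }
    Some([delta[0] / length, delta[1] / length, delta[2] / length])
}
```

A low point on a snail yields a downward direction for a standing shooter, while a high point on a flying swarm yields an upward one, and that direction could then drive something like an additive aiming animation.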

Human Rig

If you look carefully in the video above, you'll notice the arms moving weirdly at around the 8 second mark as the player is moving while firing a bow.

This is due to the fact that the Rigify rig that I am using for the human does not have proper bone relationships set up between the deformation bones.

I've started working on my own rig in order to address some of these issues. I've even made my first IK-FK switch using Blender drivers.

I'm having fun with this.

Once the rig is done I plan to eventually write a Blender addon that automates IK-FK snapping in a way that makes it re-usable for any armature.

So far I've only experimented with writing a basic Blender addon in Rust just to see if it was possible.

I'm expecting this to be my first serious Blender addon that I write in Rust.

Adding More Gameplay More Quickly

My mindset right now is to stay patient from week to week as I add in new functionality.

I am carefully setting a foundation now so that I can release gameplay features at breakneck speed later.

Over the last two months of journal entries, almost everything that I wrote about while working on making the Bowman experience better was a general purpose engine feature that I needed to write in order to power new gameplay.

That is a big cost, but it will pay for itself as I add more gameplay and re-use these systems that took so much up front work.

With features such as separate animations for different bone groups, aiming via additive animation and attaching equipment and other objects to characters, the functionality around skeletons is at a place where I can easily do quite a bit.

There is still more skeletal animation related functionality that I will want over time, and a few broken aspects of animation that I need to patch, but by and large I am in a good position when it comes to things related to skeletons.

There are a few other key areas that I think need to fall into place before I can truly prototype and release fun gameplay at a pace that I am happy with.

- Editor - Easy to place, move and edit entities and scenery.
- Editor - Easy to shape and texture terrain in the editor.
- Editor - Easy to edit the ways that one or many game tiles can be traversed within the editor.
  - This has not been started.
- Asset Compilation - Automatically generating icons for all game items by rendering them to PNG files during asset compilation.
  - This has not been started.
- Gameplay Systems - A general purpose crafting implementation that I can then leverage in different ways in order to power different skills under the hood.
  - I have an existing simple crafting system, but this will likely need to be built from the ground up based on what I have in mind functionality wise.
- Gameplay Systems - Implementing dialogue and cut scenes support more robustly using things that I learned while implementing the coordinated sequence system in 092.
  - This will take elbow grease, but it should be more of a re-organization of existing concepts as opposed to a complete re-write.

I do not plan to work on all of these at once. I don't even plan to make any of them a main focus.

I am committed to having my focus from week to week be on improving the Akigi gameplay experience by adding more and more fun things to do.

Any engine or tooling improvements that I work on from week to week need to tie back to the specific gameplay feature that I am working on at the time.

So, for example, when I'm looking to add in the models for the first village in the game I will in turn spend time improving the editor user experience for placing scenery.

By focusing on gameplay instead of tooling I will avoid the pitfall of getting blinded by my addiction to building tools. That addiction would only delay the day that I look forward to, when I can release Akigi to excited players and see it become a sustainable project that I can focus on improving for them full time.

Other Notes / Progress

I continue to get more comfortable in the art realm as I practice daily. It will be nice to look back in a year or two or three as my skills grow.

Next Journal Entry

If you're attacking while moving your lower body walk animation stutters. I know the fix for that and it should take a couple of hours.

I also need to move the pose marker that controls when the bow recoils when you fire it, because it currently recoils too early.

Earlier in the month I mentioned having some ideas around a Voodoo skill. They are still on my mind, but I'm going to hold off on prototyping them.

I want to continue improving the experience around Bowman until it becomes an aspect of the game that feels fun and worth playing on its own.

Note that making the Bowman experience better will naturally lead me to introducing and improving other aspects of the game and its engine.

For example, this week I want to start working on ways for players to make a bow and arrow in Akigi. This will lead to the introduction of a couple of new skills.

Those skills will lead to the introduction of new systems and engine features that will make it easier to make and improve Akigi.

When this approach of focusing on trying to improve Bowman begins to give diminishing returns, I will turn my attention towards another aspect of the game.

Over time this approach should lead to more and more aspects of Akigi feeling fun enough to engage with on their own.

And by having everything that I work on tie back to some driving focus, it should help to make sure that different aspects of the game feel consistent and connected.

I will also be working on a new human mesh and armature now that I am ditching Rigify.

Technically making new meshes isn't necessary as I only need a new rig, but I want to benchmark myself artistically and this feels like a fun way for me to practice.

Last time I made the human model I followed along with a tutorial. This time I am planning to just keep a few reference images open but work in a more self-directed fashion.

Cya next time!

- CFN

099 - More Gameplay, More Quickly

December 27, 2020

This week I fixed a long standing issue with how skeletal animation worked within my engine.

Previously, my script that exports bone data from Blender would export the transforms in world space and did not export the bone hierarchy.

This was fine if you only ever played one animation on a character at a time, and if you didn't modify any bones at runtime.

But if, for example, the lower body was crouching and the upper body was punching, the upper body would not move downwards along with the lower body since none of the bones were parented to each other.

Similarly, an additive animation that bent the torso wouldn't transform any of its descendants in the bone hierarchy.

I now export bones keyframes from Blender in their local spaces, along with a bone hierarchy that points to the parent of each bone.

The engine's skeletal animation system uses the local space transforms and bone relationships in order to calculate each bone's world transformation at runtime.

This fixes issues such as separate upper and lower body animations creating strange deformations.

The upper body playing a fire bow animation while the lower body plays a walk animation.

The upper body playing a fire bow animation while the lower body plays a walk animation.

Aiming

This week I introduced the ability to aim at a different area depending on the enemy.

Here's an example of aiming at a snail on the ground vs. a flying swarm of moquitos.

This boiled down to re-using the existing engine support for defining points on renderables, and then adding some support for being able to aim at one of these defined points.

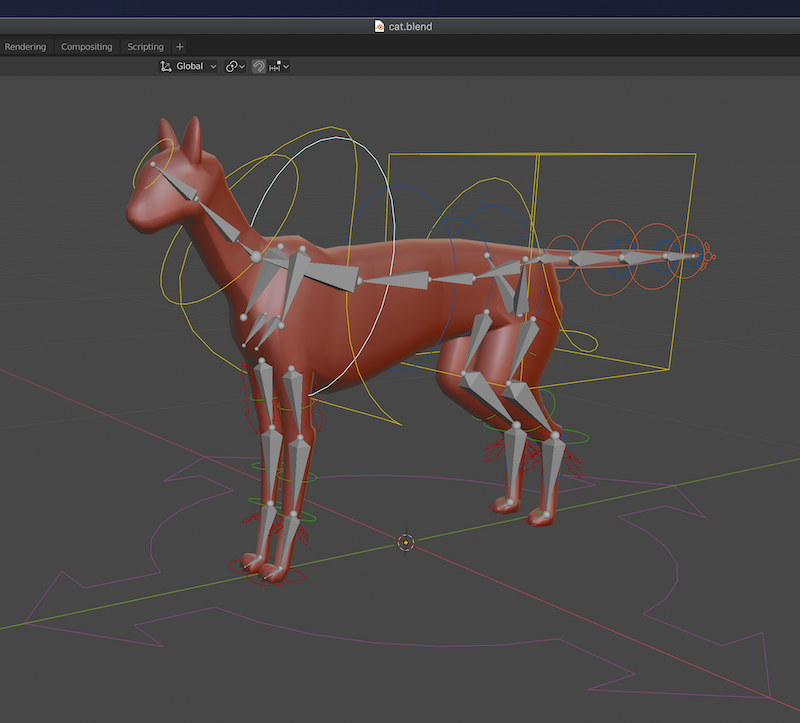

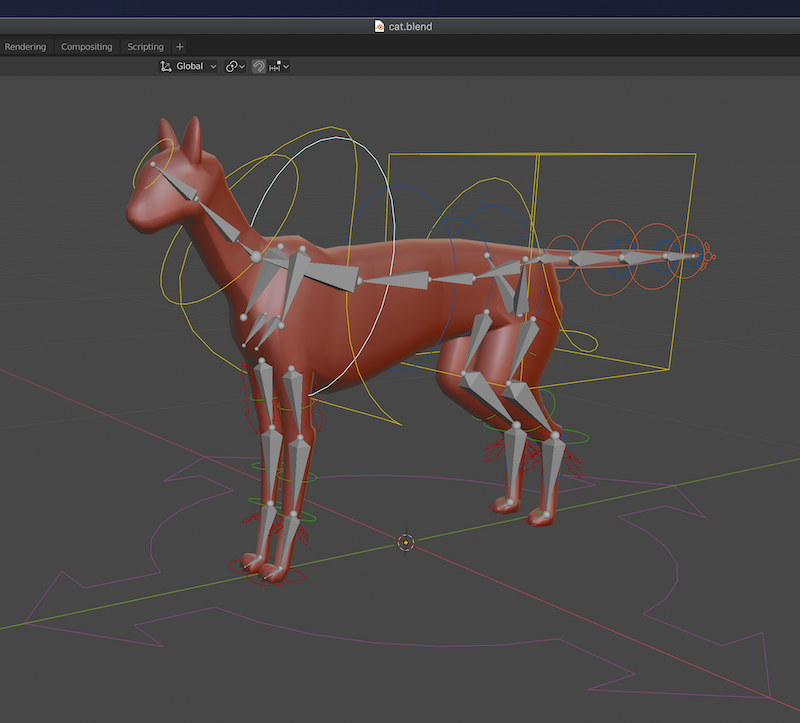

Human Rig

If you look carefully in the video above, you'll notice the arms moving weirdly at around the 8 second mark as the player is moving while firing a bow.

This is due to the fact that the Rigify rig that I am using for the human does not have proper bone relationships set up between the deformation bones.

I've started working on my own rig in order to address some of these issues. I've even made my first IK-FK switch using Blender drivers.

I'm having fun with this.

When it's done I plan to at some point write a Blender addon to automate IK-FK snapping in a way that makes it re-usable for any armature.

So far I've only experimented with writing a basic Blender addon in Rust just to see if it was possible.

I'm expecting this to be my first serious Blender addon that I write in Rust.

Adding More Gameplay More Quickly

My mindset right now is to stay patient from week to week as I add in new functionality.

My mindset is that I am carefully setting a foundation now for being able to release gameplay features at break neck speed later.

Over the last two months of journal entries, almost everything that I wrote about while working on making the Bowman experience better were